MITRE Engenuity has released the latest round of its ATT&CK endpoint security evaluations, and the results show some familiar names leading the pack with the most detections.

The MITRE evaluations stand out because they emulate advanced persistent threat (APT) and nation-state hacking techniques, unlike tests that rely on static malware samples, for example.

Last year, MITRE added Protection evaluations alongside its Detection tests. While the Detection tests are aimed at endpoint detection and response (EDR) tools, the Protection tests favor endpoint protection platforms (EPP), which are somewhat like traditional antivirus software, except with the greater sophistication that enterprise IT security requires. EDR and EPP tools have been merging over the years, yet they retain distinct functions.

While the MITRE tests are unique in the depth of security information they provide to both buyers and vendors, they come with a number of caveats, as both MITRE and security vendors have noted.

- MITRE doesn’t score results or try to say who “won,” and instead just provides the raw data. It’s up to security buyers and vendors how to use it.

- Vendors provide information on the tools and configuration they used, so buyers can use that info to see if the configuration is relevant to their environment.

- The tests don’t check for false positives, so nothing discourages vendors from tuning their tools to flag everything.

- And automated features are often turned off so that certain attack techniques can run, which means the results don’t always reflect a security tool’s full capabilities.

So while the MITRE tests give buyers more data than they might otherwise have, buyers are still encouraged to do their own research and testing, just as vendors will use the results to improve their security defenses.

But just as vendors spin the results to their advantage, we too will parse the data to try to put some shape to it – with the caveat that you really need to make sure that any security tool you buy is the right one for your environment. There’s no substitute for your own evaluation in your own environment.

Analyzing the Results

An earlier draft of this article relied on the methodology that Cynet had used to analyze the results (more on that in a moment). But after a couple of objections to that approach from readers, we’re returning to the simple and straightforward methodology we used last year, where we quantified protection and detection test results and averaged the two.
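
To make that arithmetic concrete, here is a minimal Python sketch of the averaging approach. The function name `overall_score` and its parameters are our own labels for illustration, not anything MITRE publishes, and the sample figures are the SentinelOne and Malwarebytes counts from the results table in this article.

```python
def overall_score(protect_passed, protect_total, detect_passed, detect_total):
    """Average the Protection and Detection pass rates, as percentages.

    Illustrative helper (a hypothetical name), not an official MITRE metric.
    """
    protect_pct = 100 * protect_passed / protect_total
    detect_pct = 100 * detect_passed / detect_total
    return (protect_pct + detect_pct) / 2


# SentinelOne: 9 of 9 Protection tests, 108 of 109 Detection steps
print(f"{overall_score(9, 9, 108, 109):.2f}%")   # 99.54%

# Malwarebytes skipped Linux, so the denominators are 8 and 90
print(f"{overall_score(7, 8, 83, 90):.2f}%")     # 89.86%
```

Because each percentage is computed against the tests a vendor actually faced, a vendor that skipped the Linux tests is not penalized for them, which is one more reason to read the averages as a rough guide rather than a ranking.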

Of the 30 vendors that took part in the MITRE evaluations, 22 did both the Detection and Protection evaluations, so we’ll separate them from the Detection-only group. The data is publicly available, so anyone can access and analyze it, which we would encourage you to do.

There were 9 broad tests in the Protection evaluation and 109 steps in the Detection evaluation, but those numbers dropped to 8 and 90, respectively, for vendors who didn’t participate in the Linux tests.

The 9 Protection tests also included 109 attack techniques. Cynet scored those tests based on how early in the attack path the threat was stopped. That’s an important way to look at the data, but the problem was it wound up scoring some vendors who eventually stopped a threat lower than some who missed it entirely. A rough example: A vendor who stopped one threat on the first step but missed another one entirely might score higher than a vendor who stopped both attacks on, say, the 5th step.
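
The rough example above can be worked through in a few lines of Python. Note the assumptions: we don't have Cynet's actual formula, so `depth_score` below is a hypothetical stand-in that gives linearly more credit for earlier stops, and the six-step attack length is invented purely for illustration.

```python
from typing import List, Optional

TOTAL_STEPS = 6  # assumed attack length, for illustration only


def depth_score(stopped_at: Optional[int], total_steps: int = TOTAL_STEPS) -> float:
    """Hypothetical depth-weighted credit: 1.0 for a stop at step 1,
    shrinking linearly for later stops, and 0.0 for a miss (None)."""
    if stopped_at is None:
        return 0.0
    return (total_steps - stopped_at + 1) / total_steps


def binary_score(stopped_at: Optional[int]) -> float:
    """Simple stop/no-stop credit: 1.0 if the attack was stopped at any step."""
    return 0.0 if stopped_at is None else 1.0


def mean_score(results: List[Optional[int]], scorer) -> float:
    """Average a scoring function over a vendor's per-attack results."""
    return sum(scorer(r) for r in results) / len(results)


vendor_a = [1, None]  # stopped one attack at step 1, missed the other entirely
vendor_b = [5, 5]     # stopped both attacks, but only at step 5

for name, results in (("A", vendor_a), ("B", vendor_b)):
    print(name,
          f"depth={mean_score(results, depth_score):.2f}",
          f"binary={mean_score(results, binary_score):.2f}")
# A depth=0.50 binary=0.50
# B depth=0.33 binary=1.00
```

Under these assumed weights, vendor A outscores vendor B on depth despite missing an attack outright, while the stop/no-stop view reverses the order.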

In a statement to eSecurity Planet, Cynet CTO Aviad Hasnis defended the company’s approach. “The early you stop an attacker – the more efficient you are as a vendor to prevent additional malicious activities from taking place. Think about a malware that steals credentials. If you stop the malware when it is dropped on the computer, nothing bad actually happens. Alternatively, if you stop it at a later stage, your credentials will already be stolen. This is exactly what MITRE tested.”

Perhaps there’s a way to scale the results to include both the depth of penetration and the broader stop/no stop score, but again, we encourage all who are interested in the tests to examine the MITRE data themselves.

Good Results for Cybersecurity

Twelve of the 22 vendors stopped all the Protection tests they faced, a promising showing for cybersecurity in general.

Seven of the vendors stopped all 9 of the Protection tests, including the one Linux test: Cybereason, SentinelOne, Cynet, Palo Alto Networks, CrowdStrike, Microsoft, and BlackBerry Cylance.

Another four vendors stopped all 8 Windows attack tests while skipping the Linux test: McAfee (now combined with FireEye as Trellix), Fortinet, VMware Carbon Black, and Deep Instinct. Trend Micro stopped 8 attacks, and the 9th was prevented from executing by a detection rule.

Below are the broad results for the vendors that participated in both the Detection and Protection evaluations:

| Vendor | Protection | Protect % | Detection | Detect % | Overall % |
| --- | --- | --- | --- | --- | --- |
| Cybereason | 9/9 | 100.00% | 109/109 | 100.00% | 100.00% |
| SentinelOne | 9/9 | 100.00% | 108/109 | 99.08% | 99.54% |
| Cynet | 9/9 | 100.00% | 107/109 | 98.17% | 99.08% |
| Palo Alto Networks | 9/9 | 100.00% | 107/109 | 98.17% | 99.08% |
| CrowdStrike | 9/9 | 100.00% | 105/109 | 96.33% | 98.17% |
| Microsoft | 9/9 | 100.00% | 98/109 | 89.91% | 94.95% |
| BlackBerry Cylance | 9/9 | 100.00% | 89/109 | 81.65% | 90.83% |
| McAfee | 8/8 | 100.00% | 107/109 | 98.17% | 99.08% |
| Fortinet | 8/8 | 100.00% | 87/90 | 96.67% | 98.33% |
| Trend Micro | 8/8 | 100.00% | 105/109 | 96.33% | 98.17% |
| VMware Carbon Black | 8/8 | 100.00% | 90/109 | 82.57% | 91.28% |
| Deep Instinct | 8/8 | 100.00% | 63/90 | 70.00% | 85.00% |
| Malwarebytes | 7/8 | 87.50% | 83/90 | 92.22% | 89.86% |
| Check Point | 7/9 | 77.78% | 103/109 | 94.50% | 86.14% |
| Cisco | 7/9 | 77.78% | 90/109 | 82.57% | 80.17% |
| FireEye | 6/8 | 75.00% | 89/109 | 81.65% | 78.33% |
| Broadcom Symantec | 6/9 | 66.67% | 92/109 | 84.40% | 75.54% |
| AhnLab | 5/8 | 62.50% | 83/90 | 92.22% | 77.36% |
| Sophos | 5/8 | 62.50% | 88/109 | 80.73% | 71.62% |
| Cycraft | 4/9 | 44.44% | 77/109 | 70.64% | 57.54% |
| ESET | 4/8 | 50.00% | 75/90 | 83.33% | 66.67% |
| Uptycs | 1/9 | 11.11% | 92/109 | 84.40% | 47.76% |

Many of those vendors also did well in last year’s results, demonstrating consistency across a range of advanced threats. Palo Alto’s performance in independent testing over the years has been so consistently strong that the company has been our top overall cybersecurity vendor for some time now, but 11 other vendors participating in the MITRE evaluations also made our overall top companies list. And we rank most of the MITRE participants on our top EDR products list, which we will update shortly.

Difficult Tests

The MITRE tests remain the most challenging a security vendor can face. The Detection tests emulated Wizard Spider, the threat group that uses the Ryuk ransomware, and Sandworm, the Russian group behind NotPetya. The Protection steps looked at Emotet and TrickBot, Active Directory credential dumping, Ryuk, WebShell compromise, domain host compromise, and NotPetya.

Given the severity of the threats, the results are good news for the industry in general at a time when cyberwar has also become a concern.

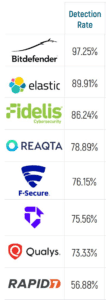

And to the right are the vendors that participated in the detection evaluations only. We’ve always taken the view that vendors should be applauded for participating in independent tests, both for the information it gives potential buyers and for the improvements in cybersecurity products that result.

MITRE will soon follow with results for deception tools and security services, the organization’s first moves beyond endpoint security.